This is a guest post by Idean Salehyan.

There has been a lot of hand-wringing and debate lately as to whether academics are engaged enough with important policy questions (see Nicholas Kristof’s article in the New York Times and just a few responses, here and here). As this conversation was circling around the blogosphere, there was an impressive initiative to poll International Relations (IR) scholars about their views and predictions regarding foreign affairs. Such surveys have the potential to make a big splash inside and outside of academia.

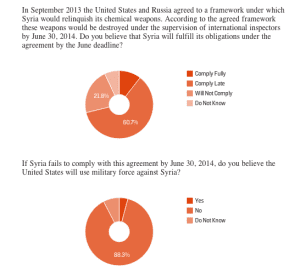

For several years, scholars at the College of William and Mary have conducted the Teaching, Research, and International Policy (TRIP) survey, which gauges IR scholars’ views of the discipline, including department and journal rankings, epistemology, and so on. This endeavor was largely inward-looking. Yet for the first time, the folks at TRIP conducted a “snap poll” of IR scholars to measure the collective wisdom of the field regarding current international events. The results of the first snap poll were recently released at Foreign Policy. It included questions on Syria, the crisis in Ukraine, and the U.S. defense budget. Key findings include that IR scholars do not think that Syria will comply on time (if at all) with plans to eliminate its chemical weapons; very few correctly predicted that Russia would send troops to Ukraine; and most do not believe that proposed cuts to the U.S. military budget will negatively affect national security. Additional polls are being planned, providing an extremely important tool for engaging policy makers and the general public. These polls matter for three reasons.

First, the poll was largely representative of IR scholars as a whole. Nearly 1,000 people responded to the survey, and the characteristics of non-respondents did not differ significantly from those who answered. The poll included men, women, scholars at research institutions as well as teaching colleges, area experts, qualitative researchers, and quantitative ones. Thus, for the first time we know how IR scholars in general feel about timely policy issues. Why is this important? While op-ed articles and blogs are useful means of engagement, these voices are self-selected and we do not know if their views reflect the general academic consensus (if there is one, but that itself is something we should like to know) or represent an extreme opinion. In a media environment in which hyperbole directs traffic, incorporating views from a broad swathe of experts can provide an important ‘check’ on those who merely scream the loudest.

Second, while ‘public’ opinion polls provide useful information to policy makers, ‘expert’ opinion polls offer a different set of views. I’m not claiming that the views of experts are inherently better than the views of the average American, but scholars conduct research on these policy issues, spend more time digesting the news, teach their students about world affairs, and are more likely to have traveled abroad. Such expertise is why news outlets frequently poll economists about their views on economic policy. Informed opinion doesn’t always lead to correct assessments (only 14% of IR scholars believed that Russia would invade Ukraine), but on complex issues, polls of experts can provide insights on topics that the general public does not consider on a daily basis.

Third, such polls are important for academia itself. Scholarly research is often a long process that involves data collection, field research, interviews, statistical analysis, and writing papers. That is, scholars have the luxury of thinking long and hard about a particular issue. These snap polls require them to use the knowledge they have acquired over years of study to answer questions about what is going on right now. While answering a survey is not a substitute for deep engagement with foreign policy, having to think about how one would respond to questions of the day is a useful exercise. Getting scholars to apply their knowledge for the purposes of a survey, I hope and expect, will encourage them to be more publicly involved in general.

If anything, these polls will generate discussion within the discipline about why we collectively think the way we do, why there is or is not consensus on key issues, and why our predictions about the future prove correct or incorrect. In looking at the results of the current snap poll, I can see several topics for debate. Why is there a general lack of agreement on military intervention in Syria? Why did we fail to anticipate the Russian invasion of Ukraine? Why does the academic consensus regarding military spending (it is too high) differ so greatly from the views of the general public? If these conversations make IR scholars more self-reflective about their own work as they engage with policymakers, so much the better.

There are many ways that scholars use their expertise to shed light on current foreign policy questions. Individually and collectively, the International Relations field plays an important role in shaping the debate in Washington and around the world. The TRIP snap polls offer another tool in the toolkit for applying the opinion and analysis of experts to a wide range of topics. It remains to be seen how the policy community will use these polls, but it cannot be said that scholars do not chime in on topics that matter.

Idean Salehyan is an Associate Professor of Political Science at the University of North Texas and is a member of the advisory board of the Teaching, Research, and International Policy survey.

Joshua Busby is a Professor in the LBJ School of Public Affairs at the University of Texas-Austin. From 2021 to 2023, he served as a Senior Advisor for Climate at the U.S. Department of Defense. His most recent book is States and Nature: The Effects of Climate Change on Security (Cambridge, 2023). He is also the author of Moral Movements and Foreign Policy (Cambridge, 2010) and the co-author, with Ethan Kapstein, of AIDS Drugs for All: Social Movements and Market Transformations (Cambridge, 2013). His main research interests include transnational advocacy and social movements, international security and climate change, global public health and HIV/AIDS, energy and environmental policy, and U.S. foreign policy.
