A common argument in favor of using robots to deliver (lethal) force is that the violence is mediated in a way that naturally de-escalates a situation. In some versions, this is because the "robot doesn't feel emotions," and so...

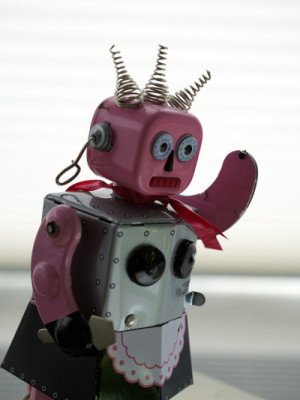

Improvised Explosive Robots
