What is the name of the journal article (or book) and what are its coordinates? Miray Philips. 2025. “The Social Construction of Christian Persecution through Quantification in International Religious Freedom Advocacy.” Sociology of Religion....

Maybe the problem isn’t that scholars don’t know how to speak to U.S. foreign-policy makers, but rather that U.S. foreign-policy makers don’t know how to engage with scholarship?

Simple steps to promote qualitative research in journals. It happened again. After months of waiting, you finally got that "Decision" email: Rejection. That's not so bad, it happens to everyone. But...

This is a guest post by Simon Frankel Pratt. He is a lecturer in the School of Sociology, Politics, and International Studies at the University of Bristol. In the social sciences, research and data...

Last night, I taught another session of our Dissertation Proposal Workshop class, and the topic was the methodology section of one's proposal. That is, how am I going to research this question and how do I justify the choices I made? This is after going through the other pieces--the question, the proposed answer, what other folks have said about this or have said about other stuff that you want to bring to this project, the theory, and the hypotheses. How does one test the hypotheses was the question du jour (or nuit). Before I start, to be clear, no one should expect...

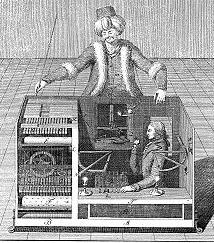

Amazon created a platform called Mechanical Turk that allows Requesters to create small tasks (Human Intelligence Tasks or HITs) that Workers can perform for an extremely modest fee such as 25 or 50 cents per task.* Because the site can be used to collect survey data, it has become a boon for social scientists interested in an experimental design to test causal mechanisms of interest (see Adam Berinsky's short description here). The advantage of Mechanical Turk is the cost. For a fraction of the expense it costs to field a survey with Knowledge Networks/GfK, Qualtrics, or other survey...

“Interpretive and Relational Research Methodologies”: A One-Day Graduate Student Workshop Sponsored by the International Studies Association-Northeast Region. 9 November 2013 • Providence, Rhode Island. International Studies has always been interdisciplinary, with scholars drawing on a variety of qualitative and quantitative techniques of data collection and data analysis as they seek to produce knowledge about global politics. Recent debates about epistemology and ontology have advanced the methodological openness of the field, albeit mainly at a meta-theoretical level. And while interest in...

Is the society depicted in this film historically accurate? Let's perform a social-network analysis! Here's a helpful hint: the "realism" of social networks in the Iliad, Beowulf, and the Tain tells us squat, zero, nothing, zilch, not a bit about their historicity. From the New York Times (h/t Daniel Solomon): Archaeological evidence suggests that at least some of the societies and events in such stories did exist. But is there other evidence, lurking perhaps within the ancient texts themselves? To investigate that question, we turned to a decidedly modern tool: social-network analysis. In...

Some commentators have suggested posts that pose questions to our readers. I think that the discussion on Peter Henne's piece, "A Modest Defense of Terrorism Studies," provides just such an opportunity. In Remi Brulin's most recent comment, she asks: ... I am very much interested in better understanding why Peter (and others of course) do believe that the distinction between state and non-state "terrorism" is so important and necessary from an analytical point of view. For my part, I would tend to think that it could in fact add a lot to our understanding of "terrorism", of the non-state or...

I spent last week doing "field research" - that is, participant-observation in one of the several communities of practice whose work I'm following as part of my new book project on global norm development. In this case, the norm in question is governance over developments in lethal autonomous robotics, and the community of practice is individuals loosely associated with the Consortium on Emerging Technologies, Military Operations and National Security. CETMONS is an epistemic network comprised of six ethics centers whose Autonomous Weapons Thrust Group collaboratively published an important...

This is a guest post from Tanisha Fazal, a professor of political science at Columbia University, and Jessica Martini, a human rights and international trade attorney based in New York City. To conduct research on terrorism and insurgency, it’s best to be able to talk to people. Combing through incident reports is helpful, but often an informal conversation over a cup of tea is as, if not more, illuminating. But according to the ban on providing “material support” (18 United States Code (U.S.C.) 2339B), buying a cup of tea for a terrorist can land you in [US] jail. In 1996 the Antiterrorism...

A standard critical argument in my field looks something like this:

1. Phenomenon X involves A assumptions about the world;
2. Approach Y contains assumptions inconsistent with A; therefore
3. Y cannot be used to understand X.

In some instances, and given some specific conditions, this can be a persuasive argument. But it is clearly not a priori true; articulated in the form above, I submit, it is a logical fallacy--one often found alongside, but distinct from, genetic fallacies. Thus, I will call this the "own-termism fallacy" until someone finds a better--or, at least, preexisting--name for...